Today’s most advanced AI systems are capable of impressive feats ranging from directing cars through city streets to writing human-like prose. They do, however, share a bottleneck: hardware. Developing cutting-edge systems frequently necessitates a massive amount of computing power. DeepMind’s protein structure-predicting AlphaFold, for example, required a cluster of hundreds of GPUs. To emphasize the difficulty, one source estimates that developing OpenAI’s language-generating GPT-3 system on a single GPU would have taken 355 years.

New techniques and chips designed to speed up certain aspects of AI system development promise (and have already reduced) hardware requirements. However, developing these techniques necessitates expertise, which can be difficult for smaller businesses to obtain. At least, that’s what the co-founders of infrastructure startup Exafunction, Varun Mohan and Douglas Chen, believe. Exafunction, which emerged from stealth today, is developing a platform to abstract away the complexities of using hardware to train AI systems.

“Improvements [in AI] are frequently underpinned by significant increases in… computational complexity.” As a result, companies are being forced to make large investments in hardware in order to reap the benefits of deep learning. This is difficult because technology is improving so quickly, and the workload size quickly increases as deep learning proves its value within a company,” Chen told TechCrunch via email. “The specialized accelerator chips required to run deep learning computations at scale are in short supply.” Efficient use of these chips also necessitates esoteric knowledge not commonly found among deep learning practitioners.”

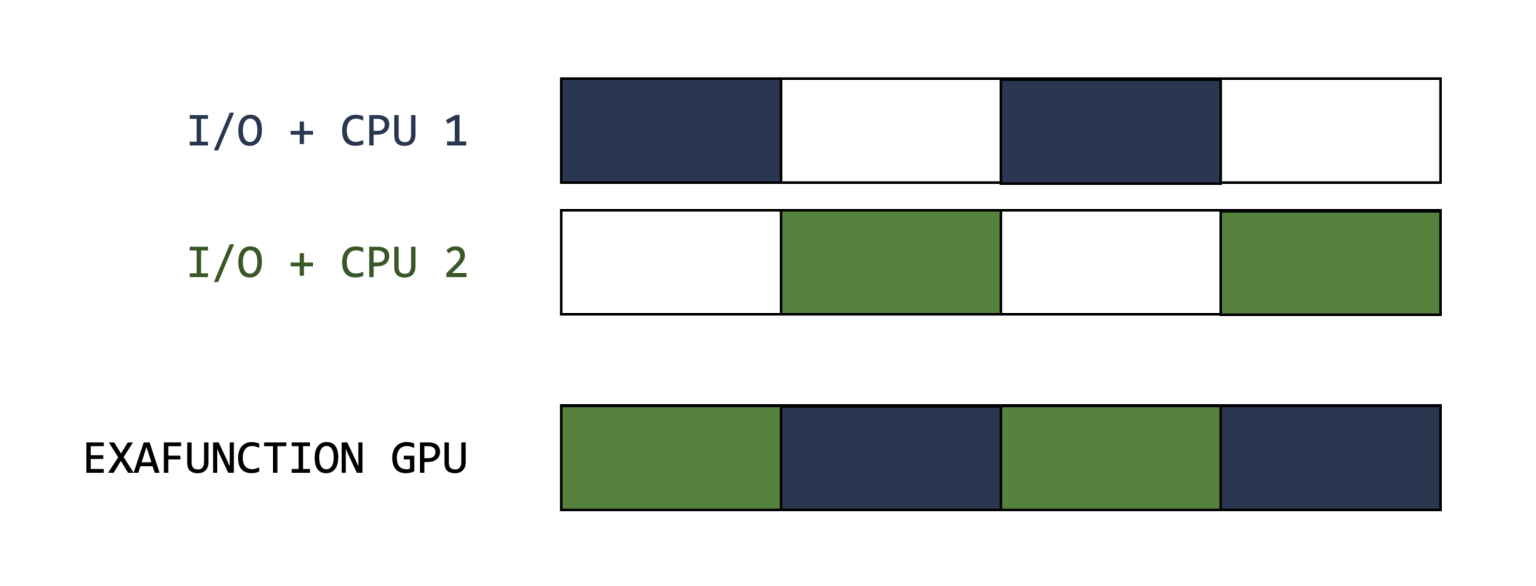

Exafunction has raised $28 million in venture capital, $25 million of which came from a Series A round led by Greenoaks with participation from Founders Fund, to address what it sees as a symptom of the AI expertise shortage: idle hardware. GPUs and the aforementioned specialized chips used to “train” AI systems — that is, to feed data to the systems so that they can make predictions — are frequently underutilized. Because some AI workloads are completed so quickly, they sit idle while other components of the hardware stack, such as processors and memory, catch up.

According to Lukas Biewald, the founder of AI development platform Weights and Biases, nearly a third of his company’s customers have less than 15% GPU utilization. Meanwhile, only 17 percent of companies said they were able to achieve “high utilization” of their AI resources in a 2021 survey commissioned by Run: AI, which competes with Exafunction, while 22 percent said their infrastructure mostly sits idle.

The expenses mount up. As of October 2021, 38 percent of companies had an annual budget for AI infrastructure — including hardware, software, and cloud fees — that exceeded $1 million, according to Run: AI. It is estimated that OpenAI spent $4.6 million training GPT-3.

“Most deep learning companies go into business so they can focus on their core technology, not so they can spend their time and bandwidth worrying about optimizing resources,” Mohan explained via email. “We believe there is no meaningful competitor that addresses the problem that we’re focusing on, which is abstracting away the challenges of managing accelerated hardware like GPUs while delivering superior performance to customers.”

“As the complexity and demandingness of our deep learning workloads [at Nuro] grew, it became clear that there was no clear solution to scale our hardware accordingly,” Mohan explained. “Simulation is a strange problem. Paradoxically, as your software improves, you may find that you need to simulate even more iterations to find corner cases. The better your product, the more difficult it is to find flaws. We found out the hard way how difficult this was and spent thousands of engineering hours trying to squeeze more performance out of the resources we had.”

Customers of Exafunction can use the company’s managed service or deploy Exafunction’s software in a Kubernetes cluster. When available, the technology dynamically allocates resources, shifting computation to “cost-effective hardware” such as spot instances.

When asked about the Exafunction platform’s inner workings, Mohan and Chen declined, preferring to keep those details under wraps for the time being. However, they explained that, at a high level, Exafunction uses virtualization to run AI workloads even when hardware availability is limited, ostensibly leading to higher utilization rates while lowering costs.

Exafunction’s reluctance to reveal details about its technology, such as whether it supports cloud-hosted accelerator chips like Google’s tensor processing units (TPUs), is cause for concern. To assuage concerns, Mohan stated that Exafunction is already managing GPUs for “some of the most sophisticated autonomous vehicle companies and organizations at the cutting edge of computer vision.”

“Exafunction provides a platform that decouples workloads from acceleration hardware such as GPUs, ensuring maximally efficient utilization — lowering costs, accelerating performance, and allowing businesses to fully benefit from hardware…” “[The] platform enables teams to consolidate their work on a single platform, eliminating the challenges of stitching together a disparate set of software libraries,” he added. “We anticipate that [Exafunction’s product] will have a profound market impact, doing for deep learning what AWS did for cloud computing.”

Market expansion

Exafunction’s Mohan may have grandiose plans, but the startup isn’t the only one applying the concept of “intelligent” infrastructure allocation to AI workloads. Grid.ai provides software that enables data scientists to train AI models across hardware in parallel, in addition to Run: AI, whose product also creates an abstraction layer to optimize AI workloads. Nvidia, for its part, sells AI Enterprise, a set of tools and frameworks that enable businesses to virtualize AI workloads on Nvidia-certified servers.

Despite the competition, Mohan and Chen see a massive addressable market. In their conversation, they positioned Exafunction’s subscription-based platform not only as a way to lower barriers to AI development but also as a way for companies dealing with supply chain constraints to “unlock more value” from the hardware on hand. (GPUs have become a hot commodity in recent years for a variety of reasons.) There’s always the cloud, but as Mohan and Chen point out, it can raise costs. According to one estimate, training an AI model on-premises hardware is up to 6.5x cheaper than the least expensive cloud-based alternative.

“While deep learning has virtually limitless applications,” Mohan said, “two of the ones we’re most excited about are autonomous vehicle simulation and video inference at scale.” “In the autonomous vehicle industry, simulation is at the heart of all software development and validation… Deep learning has also resulted in remarkable progress in automated video processing, with applications in a wide range of industries. [However], despite the fact that GPUs are critical to autonomous vehicle companies, their hardware is frequently underutilized, owing to their high cost and scarcity. [Computer vision applications are] also computationally demanding, [because] each new video stream effectively represents a firehose of data, with each camera producing millions of frames per day.”

According to Mohan and Chen, the Series A funding will be used to expand Exafunction’s team and “deepen” the product. In addition, the company will invest in optimizing AI system runtimes “for the most latency-sensitive applications” (e.g., autonomous driving and computer vision).

“While we are currently a strong and nimble team focused primarily on engineering,” Mohan said, “We expect to rapidly grow the size and capabilities of our org in 2022.” “It is clear across virtually every industry that as workloads become more complex (and an increasing number of companies wish to leverage deep-learning insights), demand for computes vastly exceeds [supply].” While the pandemic has highlighted these concerns, this phenomenon, and its associated bottlenecks, is expected to become more acute in the coming years, particularly as cutting-edge models become exponentially more demanding.”